Google’s DeepMind tackles weather forecasting, with great performance

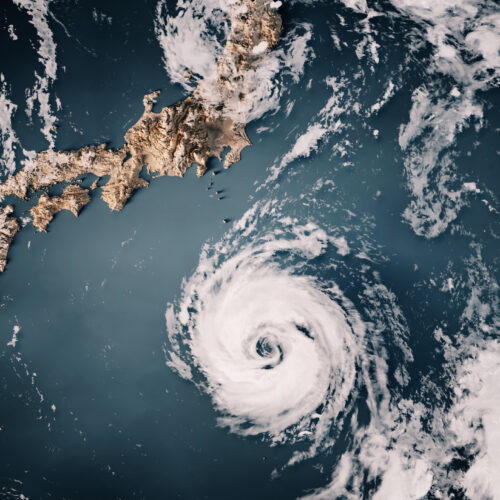

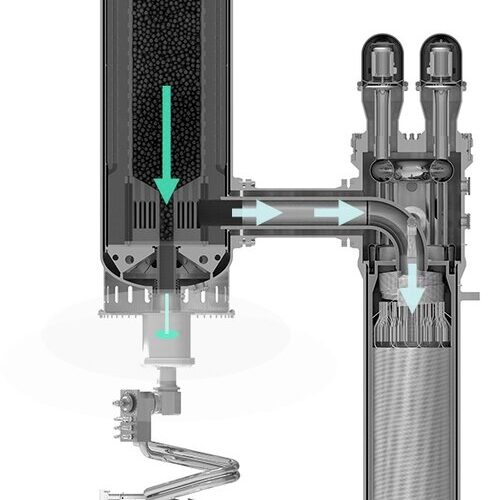

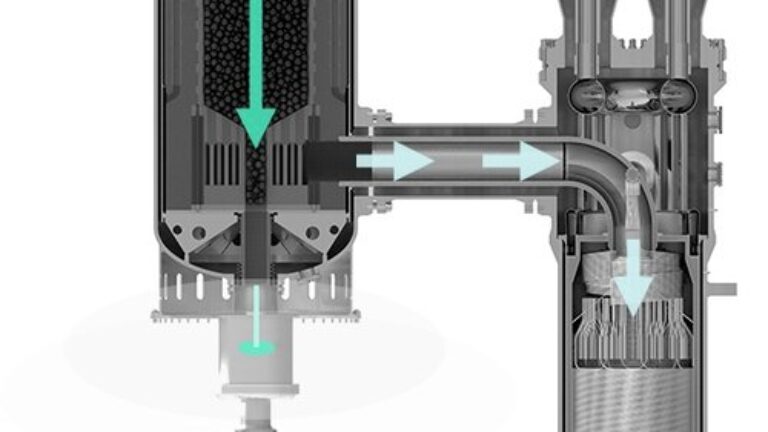

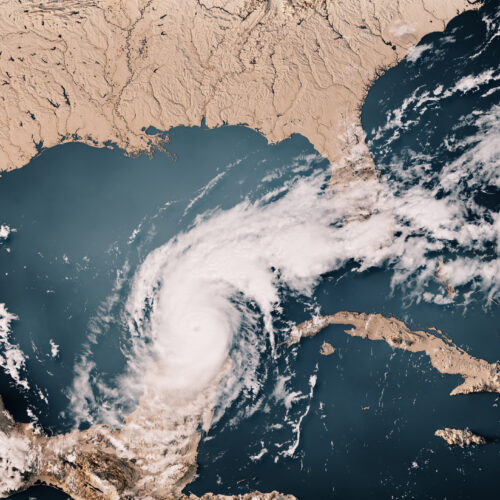

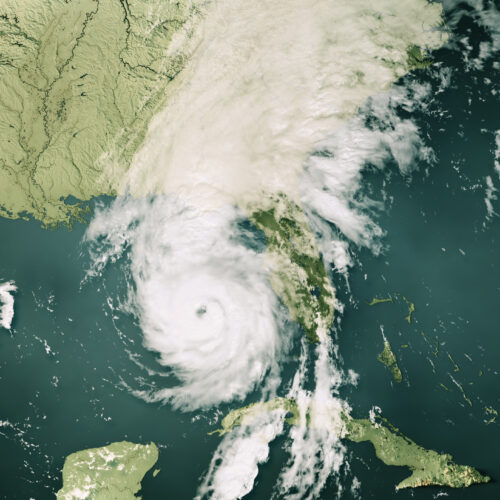

By some measures, AI systems are now competitive with traditional computing methods for generating weather forecasts. Because their training penalizes errors, however, the forecasts tend to get "blurry": as you move further ahead in time, the models make fewer specific predictions, since those are more likely to be wrong. As a result, you start to see things like storm tracks broadening and the storms themselves losing clearly defined edges.
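To see why penalizing errors pushes a model toward blur, consider a toy sketch. Nothing here comes from any real forecasting system: the 1-D grid, the Gaussian "storm," the two candidate positions, and the use of mean squared error as the penalty are all assumptions made for illustration. If a storm is equally likely to end up in one of two places, a forecast that averages the two outcomes scores better on average than one that commits to either, even though the averaged forecast is weaker and more spread out than any storm that could actually occur.

```python
import math

def storm(center, width=1.0, n=21):
    """Hypothetical 1-D storm: a Gaussian intensity bump on a grid of n cells."""
    return [math.exp(-((i - center) ** 2) / (2 * width ** 2)) for i in range(n)]

def mse(a, b):
    """Mean squared error between two intensity profiles."""
    return sum((x - y) ** 2 for x, y in zip(a, b)) / len(a)

# Assumption: two equally likely futures, with the storm at cell 6 or cell 14.
outcomes = [storm(6), storm(14)]

def expected_mse(forecast):
    """Average penalty over the equally likely outcomes."""
    return sum(mse(forecast, o) for o in outcomes) / len(outcomes)

# A sharp forecast commits to one outcome; a blurry one averages both.
sharp = storm(6)
blurry = [(a + b) / 2 for a, b in zip(*outcomes)]

sharp_loss = expected_mse(sharp)
blurry_loss = expected_mse(blurry)

print(f"expected error, sharp forecast:  {sharp_loss:.4f}")
print(f"expected error, blurry forecast: {blurry_loss:.4f}")
print(f"peak intensity, sharp vs blurry: {max(sharp):.2f} vs {max(blurry):.2f}")
```

In this setup, the blurry forecast's expected error is half that of the sharp one, while its peak intensity is roughly half as strong. A model trained only to minimize this kind of penalty has every incentive to hedge in the same way.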

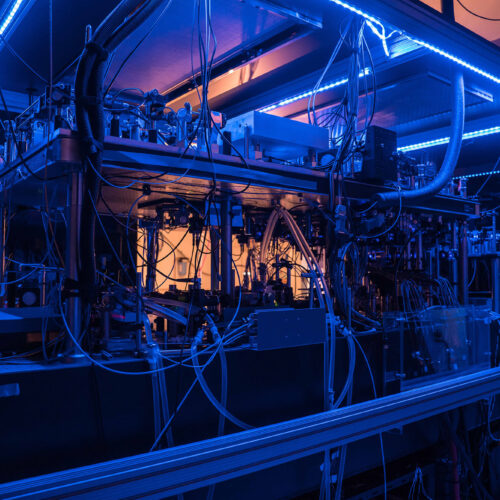

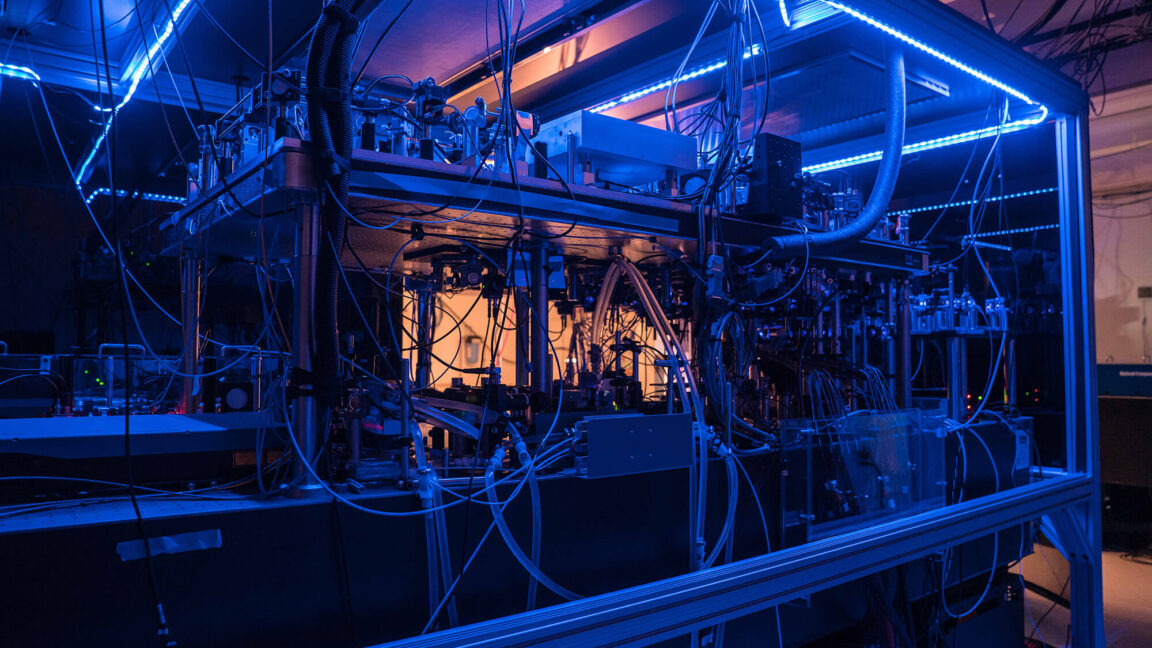

But using AI is still extremely tempting because the alternative is a computational atmospheric circulation model, which is very compute-intensive. That traditional approach is highly successful, though, with the ensemble model from the European Centre for Medium-Range Weather Forecasts considered the best in class.

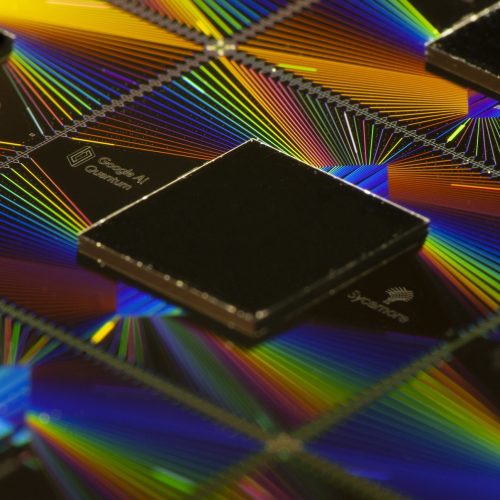

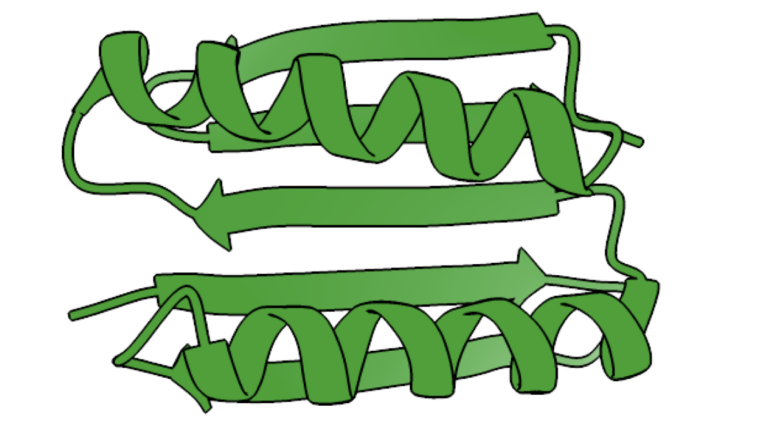

In a paper being released today, Google's DeepMind claims its new AI system manages to outperform the European model on forecasts out to at least a week and often beyond. DeepMind's system, called GenCast, merges some computational approaches used by atmospheric scientists with a diffusion model, commonly used in generative AI. The result is a system that maintains high resolution while cutting the computational cost significantly.